Anyone is free to post on the internet, but not everything they share is safe for public consumption. Social media platforms, for instance, must regularly address harmful content that violates their guidelines. And if your business is on one of Meta’s apps, you might need to consider investing in content review solutions.

Social media is just one of the many online channels that require regular monitoring for inappropriate content. There are websites, forums, and online communities where hate speech, spam, and explicit and illegal content spread like wildfire.

If you don’t want your brand to be caught in the flames, read this blog and find out how content review solutions can protect your business.

What Are Content Review Solutions and Why Do They Matter at Scale

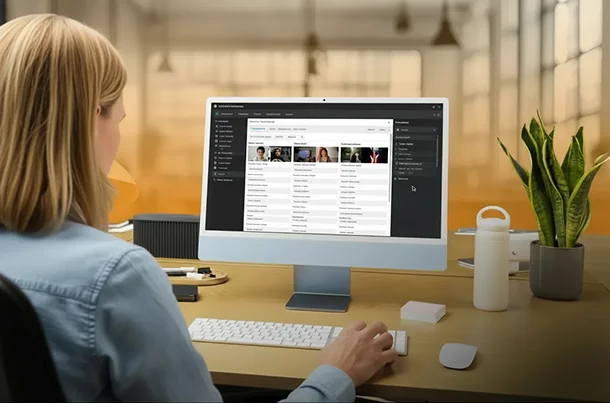

Content review solutions are third-party services that screen and monitor submitted content to make sure it doesn’t violate community guidelines and policies. The process also involves flagging and removing harmful content that could affect user experience and brand reputation.

One of the main problems platforms face is the volume of content they need to manage every day, especially during peak hours. According to a survey, about 70% of digital platforms struggle to scale their moderation efforts as content volume grows. With limited in-house capabilities, they risk exposing their audience to harmful material.

Content review solutions help by offering scalable services, allowing businesses to stay flexible even during sudden spikes in activity. This is especially useful during product launches, campaigns, and viral trends, when content traffic is expected to increase.

With effective content moderation services, delays can be minimized and the quality of shared content on all digital platforms won’t be compromised.

The End-to-End Content Review Workflow

The content review workflow follows the content moderation process, from content submission to final decision-making. Here’s a simple walkthrough:

- Review of guidelines and policies

Outsourced partners start by reviewing their clients’ guidelines and policies for moderating content on their platforms. These define what types of content are allowed or prohibited (e.g., online threats, harassment, self-harm, sexually explicit content, and violent imagery).

- User submits content

Once integrated with the platform, the review team can begin to view user submissions, whether on social media, websites, or other environments. Depending on the platform, users may share text, send chats, or post images and videos.

- An automated system reviews the content

Content reviewers may adopt pre-moderation or post-moderation for monitoring content. In pre-moderation, all types of content must be approved before they are published.

Post-moderation, on the other hand, allows content to go live first and be reviewed afterward. In most cases, an automated system handles this repetitive task by automatically flagging or removing content that doesn’t comply with platform rules.

- Complex cases are escalated to human moderators

In some cases where the content requires more contextual understanding, a human moderator will need to step in to make a fair judgment.

- The final decision is made

After reviewing the escalated content, the moderator makes the final decision to approve or reject it.
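
For readers who want to see the workflow above in concrete terms, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular provider implements it: the term lists, the `Submission` class, and the `process` function are all hypothetical, and a real automated system would use trained models rather than substring matching. The sketch shows the two moderation modes (pre-moderation holds content until approval, post-moderation publishes first and reviews afterward) and the escalation of ambiguous cases to a human.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    PRE = "pre-moderation"    # review first, publish only after approval
    POST = "post-moderation"  # publish first, review afterward


class Verdict(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    ESCALATE = "escalate"  # needs human context


@dataclass
class Submission:
    text: str
    visible: bool = False
    status: str = "pending"


# Hypothetical stand-ins for a client's policy; not a real rule set.
BANNED_TERMS = {"buy followers", "free crypto"}
AMBIGUOUS_TERMS = {"kill"}  # could be a threat or a figure of speech


def automated_review(sub: Submission) -> Verdict:
    """Toy automated check: substring match against the policy terms."""
    lowered = sub.text.lower()
    if any(term in lowered for term in BANNED_TERMS):
        return Verdict.REJECT
    if any(term in lowered for term in AMBIGUOUS_TERMS):
        return Verdict.ESCALATE
    return Verdict.APPROVE


def process(sub: Submission, mode: Mode, human_review) -> Submission:
    """Run one submission through automated review, escalation, and decision."""
    # In post-moderation the content is live before any review happens.
    sub.visible = mode is Mode.POST

    verdict = automated_review(sub)
    if verdict is Verdict.ESCALATE:
        # Complex case: a human moderator makes the call.
        verdict = human_review(sub)

    # Final decision: approved content is shown, anything else is hidden.
    sub.visible = verdict is Verdict.APPROVE
    sub.status = verdict.value
    return sub


# Usage: the human reviewer here is a placeholder that always approves.
result = process(Submission("This album will kill it"), Mode.PRE,
                 lambda sub: Verdict.APPROVE)
print(result.status, result.visible)  # approve True
```

The human reviewer is passed in as a function so the same flow works whether the escalation goes to an in-house team or an outsourced partner.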

The Role of AI and Automation in Content Review Solutions

When content volume grows beyond what human teams can manage alone, AI-powered tools become a core part of content review solutions. These technologies help platforms process large amounts of content quickly while maintaining consistency across moderation decisions.

AI-powered tools use machine learning models trained on historical data to scan text, images, audio, and video. For text-based content, natural language processing detects profanity, hate speech, spam patterns, and policy violations. For visual content, computer vision identifies explicit imagery, violence, or prohibited symbols. This automated screening allows platforms to act on risky content within seconds.

Automation also helps categorize content by severity. Low-risk violations may be removed automatically, while borderline or sensitive cases are flagged for manual review. This layered approach reduces workload pressure on human teams and speeds up response times during high-traffic periods.
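
The layered approach can be pictured as a simple routing rule. The Python sketch below is only an illustration: the threshold values, the category names, and the `triage` function are assumptions made for this example, and real systems tune these per platform and policy. It assumes a model has already produced a confidence score between 0.0 and 1.0 for each flagged item.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would be tuned per platform.
AUTO_REMOVE_THRESHOLD = 0.95
HUMAN_REVIEW_THRESHOLD = 0.60

# Hypothetical categories that always go to a person when flagged.
SENSITIVE_CATEGORIES = {"self_harm", "threat"}


@dataclass
class Flag:
    content_id: str
    category: str  # e.g. "spam", "profanity", "self_harm"
    score: float   # model confidence that the content violates policy


def triage(flag: Flag) -> str:
    """Return 'auto_remove', 'manual_review', or 'allow' for one flag."""
    # Sensitive themes are never removed automatically, however confident
    # the model is; a human reviews them.
    if flag.category in SENSITIVE_CATEGORIES and flag.score >= HUMAN_REVIEW_THRESHOLD:
        return "manual_review"
    # Clear-cut, low-risk violations are removed without human input.
    if flag.score >= AUTO_REMOVE_THRESHOLD:
        return "auto_remove"
    # Borderline scores are queued for manual review.
    if flag.score >= HUMAN_REVIEW_THRESHOLD:
        return "manual_review"
    return "allow"


print(triage(Flag("c1", "spam", 0.99)))       # auto_remove
print(triage(Flag("c2", "spam", 0.70)))       # manual_review
print(triage(Flag("c3", "self_harm", 0.99)))  # manual_review
```

Only the middle band and the sensitive categories reach human reviewers, which is how this kind of routing keeps their queue manageable during high-traffic periods.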

Human Moderators and Quality Assurance in the Review Process

While automation handles speed and volume, human moderators remain central to content review solutions. Many content decisions require cultural awareness, contextual understanding, and empathy. This is where human judgment still outperforms machines.

Human reviewers step in when content contains sarcasm, coded language, or sensitive themes that automated systems struggle to interpret correctly. They also handle appeals, where users request a second review after content removal. These cases require careful reading of platform rules and consistent application of policies.

Quality assurance teams support this process by auditing moderator decisions and identifying gaps in guidelines. Their feedback helps standardize decision-making across teams, especially when multiple reviewers work across different regions and time zones.
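
One simple way to picture such an audit is to compare a sample of moderator decisions against an auditor’s second opinion and see where they disagree most. The Python sketch below assumes that setup; the function names, the 0.8 cutoff, and the sample data are hypothetical and chosen only for illustration. A low agreement rate in one policy category may point to a guideline that needs clearer wording.

```python
from collections import defaultdict


def agreement_by_category(audits):
    """Compute the share of audited decisions where moderator and auditor agree.

    audits: iterable of (category, moderator_decision, auditor_decision).
    Returns a dict mapping each category to an agreement rate in [0, 1].
    """
    totals = defaultdict(int)
    matches = defaultdict(int)
    for category, moderator, auditor in audits:
        totals[category] += 1
        if moderator == auditor:
            matches[category] += 1
    return {category: matches[category] / totals[category] for category in totals}


def guideline_gaps(audits, min_agreement=0.8):
    """List categories whose agreement rate falls below the cutoff."""
    rates = agreement_by_category(audits)
    return sorted(c for c, rate in rates.items() if rate < min_agreement)


# Made-up sample of audited decisions, for illustration only.
sample = [
    ("spam", "reject", "reject"),
    ("spam", "reject", "reject"),
    ("spam", "approve", "approve"),
    ("hate_speech", "approve", "reject"),
    ("hate_speech", "reject", "reject"),
    ("hate_speech", "approve", "reject"),
    ("hate_speech", "reject", "reject"),
]

print(agreement_by_category(sample))  # {'spam': 1.0, 'hate_speech': 0.5}
print(guideline_gaps(sample))         # ['hate_speech']
```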

Scalability, Accuracy, and Compliance Challenges in Content Review

As platforms grow, content moderation services must keep up with rising volumes while maintaining consistency and fairness. Several challenges emerge when moderation operates at scale:

- Balancing speed and decision accuracy

Fast reviews help reduce risk, but rushing decisions can lead to false removals or missed violations. Content review solutions must balance automated filtering with human intervention to avoid poor user experiences.

- Meeting regional and regulatory requirements

Online platforms often operate across multiple regions with different legal standards. Content review teams must apply platform rules while aligning moderation practices with local laws and data protection requirements.

- Managing multilingual and culturally sensitive content

Language differences, slang, and cultural references can affect how content is interpreted. This challenge calls for trained reviewers and localized moderation strategies to avoid misclassification.

- Adapting to evolving content risks

Harmful content formats and tactics change over time. Review processes and detection models need regular updates to keep up with new abuse patterns and platform policy changes.

Conclusion: Why Effective Content Review Solutions Are Essential for Digital Trust

Content review solutions operate behind the scenes, yet they play a direct role in shaping user experience and brand credibility. When automation is combined with human review, businesses can manage large-scale content responsibly without slowing platform growth.

As digital communities expand, having a structured review process helps reduce exposure to harmful content, protect users, and maintain platform standards. For brands operating in fast-moving online spaces, Chekkee offers reliable content review solutions to support long-term platform stability and user trust.