What would the world be like with machines taking over?

It is a slightly disconcerting thought at first. The truth is that technology has been instrumental in many sectors of present-day society, even if some people still find it a confusing riddle.

Businesses that market their services digitally and extend them to online communities have witnessed the close teamwork between AI and content moderation.

It is no secret that filtering specific phrases, censoring curse words, and regulating the approval of various user-submitted content are all made faster and more accurate by the power of Artificial Intelligence. But is that all it really has to offer? Can AI fully replace humans? What role does human moderation have to play in light of the expanding capabilities of machine learning in keeping UGC in check?

Moderation is integral in most industries these days, including e-commerce marketplaces, digital forums, and various online platforms and apps.

Publishing unmoderated content on a website invites risks such as obscene, illicit, and fake content that may be inappropriate for public viewing. Some companies traditionally relied on human moderation alone. However, growing user numbers and market demands created the need for a more advanced partner to supplement human-powered content monitoring. Businesses began to invest in AI and machine learning to create algorithms that automatically regulate user-generated content (UGC).

What Is AI Content Moderation?

Still wondering whether AI is better than humans at content moderation?

AI content moderation uses automated tools to help check UGC. It reduces the volume of user posts that need to be reviewed by hand each day by scaling and optimizing the process of assessing what people post on forums, pages, websites, and private chats. It also offers a versatile way to apply content guidelines more precisely.

To make an AI adept at reviewing different types of content, it must first be fed information relevant to the community, topics, or limitations it is designed to moderate. To "educate" it, the automoderator is given samples of text or images that are banned or allowed in a social media group, website, page, or digital forum.

Disclaimer: AI does not eliminate the need for human moderators. Humans and AI must work together to provide accurate monitoring and handle the more discreet and sensitive content concerns. AI also helps shield humans from overexposure to psychologically disturbing user activities.

The use of machine learning and advanced tools in content moderation differs depending on the type of UGC.

Text

Text in the form of comments, reviews, forum replies, blog posts, and even hashtags is the most common type of content that people across the world create and share online. Checking text-based user posts precisely requires meticulous natural language processing, or the capability of machines to comprehend the context behind what humans write.

It is an essential component if you want AI to discern the intent behind various posts of the people who make up your digital community. The human language is quite complex and easily misunderstood without the proper references and resources. You have to consider diverse languages, dialects, slang terms, etymologies, and cultural differences. As such, there needs to be the perfect blend of models for deep learning, machine learning, and computationally modeled human language or linguistics. These systems work together to simplify and detect any hidden meanings, incongruities, similarities and distinctions in worded content. Translating speech to text is also possible.

The libraries embedded in natural language processing tools enable them to segment phrases, words, and sentences and reduce them to their simplest or base forms for analysis. For instance, AI identifies names of people and places like Biden or Chicago, or pinpoints the intended use of a particular word with multiple meanings. Additional applications of AI-fueled text moderation include detecting spam by feeding the technology with phrases, terms, or words that are typically used in spammy UGC. More complex uses of automoderation involve understanding the subjective undertones of what people post online.

Content moderation with machine learning helps identify a text's tone and determine whether it conveys anger, bullying, racism, or sarcasm, before labeling it as positive, negative, or neutral. Another function is entity recognition, which automatically finds names, locations, and companies. A more advanced strategy is knowledge base text moderation, which uses databases to make predictions about the appropriateness of a text. For example, the AI can classify content as fake news or as a scam.
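As a rough illustration of the spam-phrase idea above, here is a minimal Python sketch. The phrase list and the `tokenize` and `spam_hits` helpers are hypothetical names made up for this example; real moderation tools rely on trained models and much larger, curated libraries.

```python
import re

# Hypothetical phrase list; a real deployment would curate its own.
SPAM_PHRASES = ["changed my life", "click here", "free money"]

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def spam_hits(text, phrases=SPAM_PHRASES):
    """Return the listed phrases that appear as whole-word sequences in text."""
    # Pad with spaces so a phrase only matches on word boundaries.
    padded = " " + " ".join(tokenize(text)) + " "
    return [p for p in phrases if f" {p} " in padded]
```

With this list, `spam_hits("Click HERE for FREE money!!!")` returns `['click here', 'free money']`, because case and punctuation are removed before matching.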

Images

AI can review thousands of images through the assistance of a comprehensive and consistently updated internal library. It is capable of imitating how the naked human eye sees and perceives visual content.

Concrete examples of how AI checks visual content involve detecting and identifying the objects portrayed in images. When paired with semantic segmentation, AI can recognize faces (also called facial recognition technology) or items. It sorts out photos showing fully naked people, firearms, graphically violent scenarios, or gore. Text moderation also matters when reviewing images, because some images contain words or phrases with inappropriate messages. The tags or words used to describe a photo can also give away the unacceptable nature of the image in question.

Format-wise, technology reduces the time needed to check whether a forum member’s profile photo or banner complies with the file types, size restrictions, and resolutions supported on the website. Duplicated images are discovered in a jiffy, while images in unsupported formats are rejected. Blurry or pixelated photos are deleted, a solution that benefits e-commerce sites, which are quite strict about the accuracy of the details that sellers provide for their products.
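The format and duplicate checks described above could look something like the following Python sketch. The allowed extensions, the size limit, and the `check_upload` function are assumptions for illustration only. Hashing the raw bytes catches exact duplicates; a resized or re-encoded copy would need a perceptual comparison instead.

```python
import hashlib

# Assumed platform rules, for illustration only.
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png"}
MAX_BYTES = 2 * 1024 * 1024  # hypothetical 2 MiB upload limit

def check_upload(filename, data, seen_hashes):
    """Return (accepted, reason) for an uploaded image given as bytes."""
    dot = filename.rfind(".")
    extension = filename[dot:].lower() if dot != -1 else ""
    if extension not in ALLOWED_EXTENSIONS:
        return False, "unsupported format"
    if len(data) > MAX_BYTES:
        return False, "file too large"
    # Identical bytes produce an identical SHA-256 digest.
    digest = hashlib.sha256(data).hexdigest()
    if digest in seen_hashes:
        return False, "duplicate"
    seen_hashes.add(digest)
    return True, "ok"
```

The caller keeps one `seen_hashes` set per site or community, so the second upload of the same file is rejected as a duplicate.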

As for context, the background of an image should also be checked, particularly when it raises suspicion of violating any of the guidelines in the community, website, or page. Say the main focus of a photo is a box, but if you stare at the background colors for a solid minute, you see a carefully concealed middle finger sign. It was expertly disguised by using a hue darker than the picture’s false main subject.

Identity theft is prominent online, so combined text and image checking allows advanced tools to find images with watermarks or logos of existing brands and graphic artists.

Video

Video moderation primarily focuses on learning the sequence of frames in video content. The process makes timestamps more significant on websites and platforms that allow users to produce or share content in the form of a digital recording, a motion picture, or a film. Figuring out the chronological order of the frames is the secret behind how AI gets to understand the subject of a video while also scrutinizing its quality. It answers the following questions about quality: Is the video clear, or is it blurry? Is it in a format that is compatible with the platform's framework?

Speech recognition is integral in monitoring videos uploaded by end-users and community members. YouTube’s strict standards disallow the use of swear words, racial slurs, discriminatory remarks, and sexually suggestive remarks or comments, whether straightforwardly or subtly done, on the videos produced by their content creators.

Before someone reaches out to YouTube to report a content creator for using foul words on their videos, it is possible for AI to be two steps ahead and block the violator in advance.

AI Content Moderation Challenges

Content moderation using machine learning is an efficient technology that helps several companies at present. Sadly, like any other advanced tool, AI isn't excused from scrutiny and obstacles. Content moderation poses many challenges to AI models.

Even the best tools available have their own share of imperfections, and so breaking down these discrepancies and shedding light on them is of high importance.

Presently, automated systems with machine learning are a big help in enhancing moderation processes. They act as triage systems pushing suspicious content to human moderators and weeding out anything inappropriate or in violation of the community or pages’ guidelines. AI uses visual recognition to identify a broad category of content, including fishing out user posts with nudity, violence, and/or guns.
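The triage role described above can be sketched in a few lines of Python. The `triage` function, the `score` input, and both thresholds are hypothetical: the sketch assumes some upstream model has already produced a violation score between 0 and 1 for each post.

```python
def triage(score, approve_below=0.2, reject_above=0.9):
    """Route a post based on a model's violation score (0 = safe, 1 = violating).

    The thresholds are illustrative assumptions, not recommended values.
    """
    if score >= reject_above:
        return "reject"        # clear violation, removed automatically
    if score < approve_below:
        return "approve"       # clearly fine, published automatically
    return "human_review"      # uncertain, pushed to a human moderator
```

Widening the gap between the two thresholds sends more content to human moderators, while narrowing it leaves more decisions to the machine.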

The problem with getting machines to comprehend this kind of content is that it essentially asks them to understand human culture—a detail that may be too subtle to be described in simple terms. In the end, no matter what companies do, AI is not spared from making mistakes.

Here is a breakdown of some of its shortcomings. Check out the following content moderation challenges before you decide to employ text, image, and video moderation services:

Creator and Dataset Bias

One of the most pressing concerns around algorithmic decision-making across a range of industries is the presence of bias in automated tools. Decisions based on automated tools, including content moderation decisions, run the risk of further marginalizing and censoring groups that already face extreme prejudice and discrimination online and offline.

A report by the Center for Democracy & Technology said that several types of bias could be amplified by using these tools. NLP tools, for example, are typically built to analyze text in English. Tools with lower accuracy on non-English text can harm non-English speakers, especially when applied to languages that are not widely represented on the internet.

This reduces the accuracy of any body of knowledge used to train AI. The use of such automated tools should be limited when making globally relevant and multicultural content moderation decisions. Automated tools for checking text-based content should also be tailored to the main language or dialect of the country or area where they are used.

In addition, researchers' personal and cultural biases are likely to find their way into training datasets. For example, when a library of information is being created, the subjective judgments of the individuals annotating each document can impact what is constituted as hate speech and what specific types of speech, demographic groups, and so on are prioritized in the training data. This bias can be mitigated to some extent by testing for intercoder reliability, but it is unlikely to combat what the majority perceives as a more fitting category for a specific context.
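The article does not say how intercoder reliability should be tested. One common measure for two annotators is Cohen's kappa, which compares the observed agreement p_o with the agreement p_e expected by chance: kappa = (p_o - p_e) / (1 - p_e). The Python sketch below is an illustration under that assumption, and the function name is made up for this example.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equally long, non-empty label lists")
    n = len(labels_a)
    # Share of items on which both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)
```

A value near 1 means the annotators agree far more often than chance would predict, while a value near 0 suggests the labeling guidelines leave too much room for personal judgment.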

Incompatible Resources

Automated content moderation is a milestone in reviewing online content. As such, AI moderation must be well-equipped with an updated configuration, design, or reference library about the digital community, page, or application it is made to regulate. Imagine deploying an advanced moderator with features such as face detection, real-time text-to-speech recognition, or instant banning of videos and user profiles with harmful details on an outdated platform or site. It would be impossible for an AI to perform smoothly within an obsolete environment.

Psychology and emotions

Technology is progressively learning to evaluate qualitative messages in a sentence, video, or picture. At the same time, it still lacks accuracy and independence when capturing the intent behind a user's statements. AI moderation is not yet developed enough to read the range of human emotions or pick up subtle hints of disturbing implications the way humans do. It will take time before AI can do so independently and in real time.

Say a user excitedly shares happy news with fellow members and, in the moment, drops the F-bomb along with a couple more swear words out of sheer excitement. The scenario shows that some expressions blur the line between elation and anger. On its own, the AI moderator will either flag or delete the post. Meanwhile, a human moderator may hit the pause button on the automoderator and double-check that the user did not, in fact, mean to curse their digital comrades.

Another example would be the excessive use of exclamation points and capitalized letters, which AI may not detect right off the bat as a vulgar or blatant display of negative emotions. Suicidal hints may or may not be identified by robots. Let’s say a person regularly shares heart-wrenching poems or quotes about saying goodbye for good. As long as there are no explicit texts or objects in any of the posts, the AI will probably not assess them as a cry for help.

Building public trust

Gaining the public’s trust in the capability of AI is essential. Doubts are bound to arise, especially if the technology or structure is relatively new to most of the users who encounter it. Companies must dedicate time and effort to feeding their automated moderators so that they can better comprehend the diversity of people and of decision-making. This challenge can be overcome by running routine tests on AI-based content moderation systems.

Impact on Freedom of Speech

If an AI's training data leads it to misinterpret the speech of a group of online users, there is a chance that those users will be alienated and judged by the rest of the community, thereby affecting their freedom of speech. This implies that users will often have their expression rights adversely affected without any investigation, and without understanding why, how, or on what basis.

Unlike humans, AI cannot evaluate cultural context, recognize incongruity, or conduct critical analysis. Even if individuals are informed about artificial intelligence systems' existence, scope, and operation, those systems might baffle and blur lines that separate transparency and suitability from abusing one’s right to self-expression. At present, a full-scale technology with the capacity to match human-powered decision-making and judgment in moderation is yet to be developed.

Inconsistencies

Definitely, AI is faster than human content moderators. Still, it may not be as careful and meticulous.

Take profile photos and images depicting different individuals, for instance. Some faces resemble each other, thereby raising the possibility of wrongly tagging an individual’s profile photo as someone else’s.

People can easily bypass our trusty modern content checkers by using digitally manipulated images. If you’ve ever tried FaceApp, you know how easy it is to alter your facial features to look younger or older, or to create a version of yourself as the opposite sex.

If an automated moderator’s resources are not consistently fed with brand-new information, then it will have a hard time matching the pace of new lingo, slang terms, and socially relevant trends that go hand-in-hand with the kind of content and community it is tasked to police.

Tricky internet users fill their posts with techniques to bypass content filters. They add special characters such as asterisks and periods in between the letters of prohibited words, or discreetly spell sexual innuendos using the first or last letters of each word in a seemingly harmless sentence. These simple manipulations are enough for an AI to give these UGCs the coveted green light.
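To show why plain word matching misses these tricks, and how a filter might compensate, here is a minimal Python sketch. The banned-word set and all function names are hypothetical placeholders. The acrostic check in particular is a crude heuristic that would produce false positives with short banned words.

```python
import re

BANNED_WORDS = {"badword"}  # hypothetical placeholder list

def strip_separators(token):
    """Lowercase a token and drop asterisks, periods, and other symbols."""
    return re.sub(r"[^a-z0-9]", "", token.lower())

def has_obfuscated_banned_word(text, banned=BANNED_WORDS):
    """Catch banned words split up by special characters, e.g. b*a*d.w.o.r.d."""
    return any(strip_separators(token) in banned for token in text.split())

def has_acrostic_banned_word(text, banned=BANNED_WORDS):
    """Catch banned words spelled with the first or last letter of each word."""
    words = re.findall(r"[a-z]+", text.lower())
    first_letters = "".join(word[0] for word in words)
    last_letters = "".join(word[-1] for word in words)
    return any(b in first_letters or b in last_letters for b in banned)
```

Neither check handles letters separated by spaces or swapped for look-alike characters, which is one reason human review remains necessary.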

Cyber-vulnerability

One of the disadvantages of AI is that it becomes powerless and inefficient when bugs, scammers, and power interruptions enter the picture. A highly compromised moderation database puts a website and its users at risk.

Bugs can cause the software to slow down or malfunction amid an influx of content to be checked on the moderator’s end. Worse, hackers are constantly lurking around an app or site, waiting for the perfect timing to strike and disable advanced moderation methods.

If you don't protect your mod robots from these digital attacks, your brand and supporters will experience more severe damage in the future.

The partnership of AI and Human Content Moderation

There will always be a comparison between artificial intelligence and human content moderation. On the bright side, the differences between humans and technology are also the key elements that balance the strong and weak points of each.

Technology was created to improve life in general. Humans must do their part to nurture what they have produced, so that any advanced tool keeps functioning properly and meets their expectations.

How can humans and AI work in perfect unison when regulating content online?

For starters, recent news revealed Zuckerberg's new addition to his team's content moderation algorithms: Conflict control on various Facebook groups. To do this, the social media behemoth's AI moderator will use several signals and clues from group conversations.

These signals are programmed and structured to indicate continuous conflict among members. In response, the confrontation is immediately brought to the group moderator's attention to implement the appropriate action plan as soon as possible.

It is a little early to conclude its efficacy, but the premise is already promising. It also serves as an excellent representation of human partnership with AI.

AI for speed, humans for verification

Human moderators frequently experienced burnout at work, particularly when AI was still under development and had yet to play a prominent role in monitoring what people share across websites and forums. Their responsibilities involved scanning for spam, sifting through thousands of profile images, and evaluating flagged posts. In the long run, speed and quality were both compromised.

With AI now in the picture, the volume of content that needs to be checked will eventually be reduced. For example, new profiles come by non-stop on online dating sites. The role of the bots is to detect the subjects on each profile photo and highlight any text that violates the website's guidelines. Then, human moderators will follow up on these discrepancies and decide whether to approve or ban a member.

But what if someone uses a celebrity's image as their account's default photo? Suppose the AI's stored data is not fully updated to identify the star whose identity is stolen and assumed by a scammer. In that case, the latter gets to roam the online community freely. On the other hand, humans can recognize famous individuals in a heartbeat and stop the unauthorized utilization of these images.

Scammers and spammers are notorious for recycling their lines. Have you ever checked the comment section on an article shared on Facebook? Repeatedly shared paragraphs with introductions that go, “Mr. X changed my life!” and “I never thought it would work, but…” are surefire signs of spam. Bots and non-robotic moderators work together by detecting these comments, deleting them, and, if needed, banning the user for good.

In some instances, predators use photos from a minor’s social profile. It is crucial that humans intervene and ensure that underage individuals are well-protected across all digital platforms they can access.

For copyright infringement and scams, AI may be programmed to detect repetitive patterns in fraudulent schemes as well as alert its human counterparts to any duplicated and illegally reproduced content. Human moderators may conduct additional research, including verifying the IP addresses of suspicious users to help locate where they are based.

Another excellent way for AI and humans to team up is through shadow banning. When an individual is shadow banned in a digital group, their profile and content are not readily visible to other members. Their posts are hidden from public view without them knowing. The tactic is effective against trolls who live for the drama. Once they find out that their (partially removed) comments are receiving zero reactions, they will soon grow tired and waste people’s time elsewhere.
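Recycled lines like these can be surfaced automatically before a human decides what to do with them. The Python sketch below is a simplified illustration; the function names and the `min_count` threshold are assumptions, and it only catches near-identical wording.

```python
import re
from collections import Counter

def normalize(comment):
    """Lowercase a comment and strip punctuation so near-copies compare equal."""
    return " ".join(re.findall(r"[a-z0-9']+", comment.lower()))

def repeated_comments(comments, min_count=3):
    """Return normalized comments that appear at least min_count times."""
    counts = Counter(normalize(comment) for comment in comments)
    return {text for text, n in counts.items() if n >= min_count}
```

The returned set can be handed to human moderators, who decide whether the matching comments are spam and whether the accounts behind them should be banned.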
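In code, the core of a shadow ban is a visibility filter. The following Python sketch assumes posts are dictionaries with an `author` key and that `shadow_banned` is a set of usernames; both are hypothetical data shapes chosen for this example.

```python
def visible_posts(posts, viewer, shadow_banned):
    """Return the posts a given viewer should see.

    Shadow-banned authors still see their own posts, so nothing looks
    different to them, while everyone else sees none of their content.
    """
    return [
        post for post in posts
        if post["author"] not in shadow_banned or post["author"] == viewer
    ]
```

Because the banned user's own view is unchanged, the only sign of the ban is the lack of reactions from other members.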

Content is not the only thing that moderators can keep a keen eye on. Even user activities have no escape. Some companies hire both types of moderators to check the engagements of their members based on their accounts. They do this to prevent any abuse and misuse of their services or features. For instance, individuals with paid accounts may be covertly selling their profiles for extra profit. Or, users with free accounts might try to imitate the actions of paid users to try and trick the system into giving them access to exclusive services.

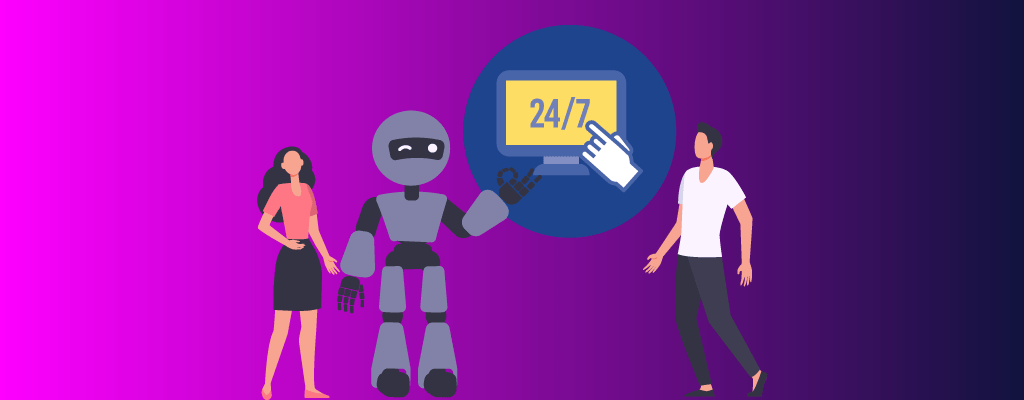

Balancing the workload

In the heat of internet misconduct, human moderators reach out to violators and start dialogues to correct their errors. As a result, people can see that there are actual humans behind the rules imposed on their unacceptable content. Meanwhile, bots share the burden of supervising a salad bowl of communities and member posts. The term 24/7 becomes all the more reasonable because once one of your non-AI moderators ends their shift, AI will be there to pick things up right where they stopped.

More importantly, if technical difficulties are encountered while implementing advanced moderation algorithms, then a dependable network of experienced moderators can always take charge and fix the inaccuracy.

Conclusion

AI has generally changed and enhanced the lives of everyone; there is no question about that. People, businesses, and even countries across the globe have become connected. People’s homes and work environments have become more innovative, and the way entrepreneurs do business has become more proficient. Yet some companies still doubt how machines can help attain their goals because of their limited cognitive skills and critical thinking ability.

The question remains:

Is AI content moderation better than humans?

Well, it is—in some aspects, but it can never fully replace humans. That’s not to say that humans will survive without technology.

Chekkee can attest to that. Our company offers highly skilled, expert moderators backed by the impressive feats of AI-powered content review. We check images, videos, and texts with consistently updated machine learning tools. Chekkee has earned a reputation for professionally regulating online communities, forums, websites, social media pages, and all types of UGC you can think of.

If customized AI content moderation is what you need, then we’re the perfect team to assist you with that! Send us an inquiry so we can fill you in!